A Serverless CI/CD Pipeline for SAM applications

Previous blog posts described how simple serverless applications can easily be built using either Node.js or Java. These applications are structured in such a way that they can be built and deployed to the cloud using simple commands. This is the starting point for fully automating the process with a CI/CD pipeline based on managed services provided by AWS.

Prerequisites

In my recent projects I’ve settled on a toolchain composed of GitHub for hosting my source code and various services provided by AWS to automate my workflows. For example, publishing content to this blog is completely automated once I push code to a certain GitHub repository. I described that build pipeline in a previous blog post. It provides the foundation for doing the same for serverless applications.

The Goal

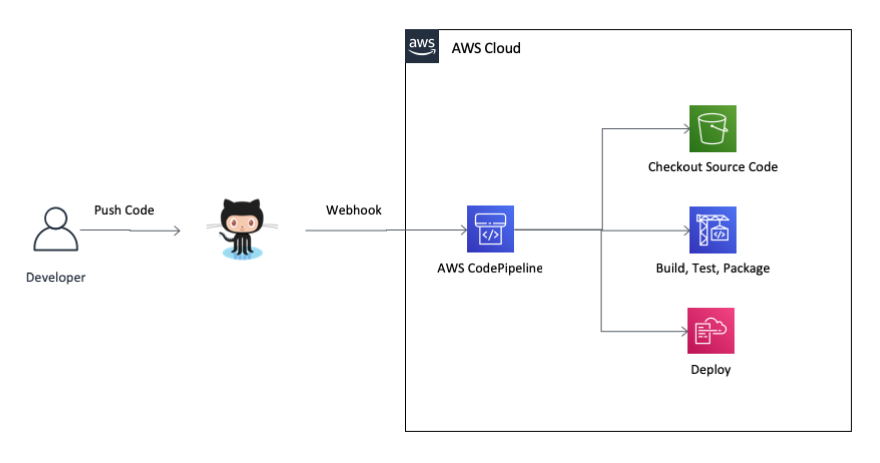

Every time someone pushes code to the repository hosted on GitHub, it should be checked out, built and tested automatically. This process is called Continuous Integration. Furthermore, the code should also be deployed automatically to a development environment to achieve Continuous Deployment.

Depending on the kind of application and the effort one is willing to invest, more steps can be added after deploying to the development environment. For example, in one of our projects integration tests are run against the code that has been automatically deployed to the development environment. Furthermore, if no errors occur during build, unit testing, deployment and integration testing, the code can be deployed to the next environment after manual approval. In this case deployments to the other environments are done in a Continuous Delivery fashion.

The Pipeline

The CI/CD pipeline consists of two services: AWS CodePipeline and AWS CodeBuild. The deployment itself is performed by launching or updating a CloudFormation stack.

For setting up the pipeline I’ve created a CloudFormation template. Based on this template it’s easy to launch a new stack in any AWS account and region.

In order for CodePipeline to be able to access the GitHub repository, a Personal Access Token must be configured in GitHub. The GitHub documentation describes how to create one. The template expects this token to be stored in the Parameter Store of AWS Systems Manager. The following command stores the token in the Parameter Store:

aws ssm put-parameter --name /github/personal_access_token --value TOKEN --type String
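
Before launching the stack it can be worth confirming that the parameter really exists under the expected name. The following is a minimal sketch, not part of the template: the function name is made up for illustration, and it only assumes the parameter name used in the command above. It prints the parameter type rather than the value, so the token itself never shows up in the terminal.

```shell
# Sketch: report how the token parameter is stored, or fail if it is missing.
# The default name is the one used in the put-parameter command above.
# The function name is hypothetical.
github_token_parameter_type() {
  aws ssm get-parameter \
    --name "${1:-/github/personal_access_token}" \
    --query 'Parameter.Type' \
    --output text
}
```

Calling github_token_parameter_type without arguments should print String if the parameter was stored with the command above. If the parameter does not exist, the AWS CLI reports an error and the function returns a non-zero exit code.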

The template itself has a small set of parameters to specify the information about the GitHub repository, such as the repository owner, name and the branch to build. By default the pipeline is configured to run every time a change is pushed to the master branch. Furthermore, the runtime of the project can be configured. Currently, Java 8, Node.js 8.11, Python 2.7 and 3.6, and Ruby 2.5 are supported. To set up the CI/CD pipeline for the sample project used in a previous blog post, the following command can be used:

aws cloudformation create-stack --stack-name my-app-pipeline \
  --template-url https://s3.eu-central-1.amazonaws.com/com.carpinuslabs.cloudformation.templates/ci-cd/sam-build-pipeline.yaml \
  --parameters ParameterKey=RepositoryOwner,ParameterValue=jenseickmeyer \
    ParameterKey=RepositoryName,ParameterValue=todo-app-nodejs \
    ParameterKey=BuildEnvironment,ParameterValue=nodejs8.11 \
  --capabilities=CAPABILITY_IAM

It just takes a couple of minutes until the pipeline is set up and configured. The pipeline will immediately start to check out the code from the repository and build the project.
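
Instead of refreshing the console while the stack is being created, the AWS CLI can wait for the result. This is a small sketch under the assumption that the stack is called my-app-pipeline as in the command above; the function name is made up for illustration.

```shell
# Sketch: block until the pipeline stack is created, then print its status.
# The function name is hypothetical; the default stack name is the one used above.
wait_for_pipeline_stack() {
  stack_name="${1:-my-app-pipeline}"
  # "wait" polls the stack and exits non-zero if creation fails or times out.
  aws cloudformation wait stack-create-complete --stack-name "$stack_name" || return 1
  aws cloudformation describe-stacks \
    --stack-name "$stack_name" \
    --query 'Stacks[0].StackStatus' \
    --output text
}
```

After a successful creation, wait_for_pipeline_stack my-app-pipeline should print CREATE_COMPLETE. If the stack fails to create, the function returns a non-zero exit code, which makes it usable in scripts.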

Build Configuration

To prepare your project to be built, it must contain a configuration for CodeBuild, the service the pipeline uses to actually build the project. The exact configuration depends on the runtime used for the serverless application. Here’s a simple buildspec.yaml file for an application based on Node.js:

version: 0.2

phases:
  pre_build:
    commands:
      - cd src
      # Install the dependencies
      - npm install
  build:
    commands:
      # Run tests
      - npm test
      # Remove all dependencies not relevant for production
      - npm prune --production
      - cd ..
  post_build:
    commands:
      # Create and upload a deployment package
      - aws cloudformation package --template-file sam-template.yaml --s3-bucket $S3_BUCKET --output-template-file sam-template-output.yaml

artifacts:
  files:
    - sam-template-output.yaml

Each phase lists the commands that should be run at that point of the build. In the build specification outlined above, all the dependencies are installed, the tests are run and the non-production dependencies are removed to reduce the package size. At the end the AWS CLI is used to package up the application and upload this deployment package to an S3 bucket. This S3 bucket is provided as an environment variable to CodeBuild. It is configured via CloudFormation during stack creation.
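
When a build fails in the pipeline, it can help to run the same steps by hand. The following sketch mirrors the commands of the build specification above in a single shell function. It assumes that it is run from the project root, that npm and the AWS CLI are installed, and that you pass the name of an S3 bucket you can write to. The function name and the bucket are placeholders, not something the template provides.

```shell
# Sketch: run the buildspec phases by hand from the project root.
# Usage: run_build_locally <bucket-name>   (function and bucket are hypothetical)
run_build_locally() {
  S3_BUCKET="${1:-}"
  if [ -z "$S3_BUCKET" ]; then
    echo "usage: run_build_locally <bucket-name>" >&2
    return 2
  fi
  # pre_build and build: install, test, then drop the dev dependencies.
  # The subshell keeps the caller's working directory unchanged.
  (cd src && npm install && npm test && npm prune --production) || return 1
  # post_build: upload the code and write the transformed template.
  aws cloudformation package \
    --template-file sam-template.yaml \
    --s3-bucket "$S3_BUCKET" \
    --output-template-file sam-template-output.yaml
}
```

If the tests fail, the function stops before anything is uploaded, which is the same order of events as in the pipeline.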

The package command generates a CloudFormation template based on the SAM template contained in the project. The pipeline is configured to expect a file called sam-template-output.yaml in the deployment stage. Therefore, the package command must be configured to create this file, and the buildspec.yaml must provide it as the output of the build stage.
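
Since the pipeline depends on these file names, a quick local check before the first push can save a failed pipeline run. This is a minimal sketch: the function name is made up, and the location src/package.json is an assumption derived from the build specification above, which runs npm install inside src.

```shell
# Sketch: check that a project contains the files the pipeline relies on.
# Usage: check_project_layout [project-dir]   (function name is hypothetical)
check_project_layout() {
  layout_dir="${1:-.}"
  layout_status=0
  # src/package.json is an assumption based on "cd src" followed by "npm install".
  for required in buildspec.yaml sam-template.yaml src/package.json; do
    if [ ! -f "$layout_dir/$required" ]; then
      echo "missing: $required"
      layout_status=1
    fi
  done
  # The build stage must hand sam-template-output.yaml to the deployment stage.
  if [ -f "$layout_dir/buildspec.yaml" ] &&
     ! grep -qF 'sam-template-output.yaml' "$layout_dir/buildspec.yaml"; then
    echo "buildspec.yaml does not mention sam-template-output.yaml"
    layout_status=1
  fi
  return "$layout_status"
}
```

The function prints one line per problem and returns a non-zero exit code if anything is missing, so it can also be used as a pre-push hook.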

See the CodeBuild documentation for more information about what can be configured.

Advantages & Disadvantages

Based on the template, a new CI/CD pipeline for a new serverless application can be set up in minutes. Since it only makes use of managed services provided by AWS, there’s basically no need to worry about managing servers or thinking about scalability or availability.

Additionally, as with many managed services provided by public cloud vendors, the costs for using these services scale with the amount of usage. This means that the pipeline and the builds only incur costs when they actually run. And even then the costs are pretty low when compared to setting up and managing your own infrastructure for the CI/CD pipeline.

Nevertheless, there are downsides to using these services which should not go unmentioned. First of all, the execution of the pipeline seems to be a little slower when compared to other products in this field. Furthermore, other products like Jenkins, GitLab or Azure DevOps provide integrations with many other tools that can be used out of the box. With AWS CodePipeline it’s up to the developer to build such integrations. This starts with such simple things as sending notifications about failed builds via email, Slack, Teams, etc.
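
To give an idea of what such an integration can look like, here is a rough sketch of a notification for failed builds. It is not part of the template. It assumes that an SNS topic already exists, that its access policy allows events.amazonaws.com to publish to it, and that the recipients are subscribed to the topic. The function name, the rule name and the target id are made up for illustration.

```shell
# Sketch: route failed CodeBuild builds to an existing SNS topic.
# Usage: notify_on_failed_builds <sns-topic-arn> [rule-name]
# Function name, default rule name and target id are hypothetical.
notify_on_failed_builds() {
  topic_arn="${1:-}"
  rule_name="${2:-codebuild-failed-builds}"
  if [ -z "$topic_arn" ]; then
    echo "usage: notify_on_failed_builds <sns-topic-arn> [rule-name]" >&2
    return 2
  fi
  # Match the state-change events CodeBuild emits when a build fails.
  aws events put-rule \
    --name "$rule_name" \
    --event-pattern '{"source":["aws.codebuild"],"detail-type":["CodeBuild Build State Change"],"detail":{"build-status":["FAILED"]}}' \
    || return 1
  # Deliver matching events to the topic.
  aws events put-targets \
    --rule "$rule_name" \
    --targets "Id=failed-build-topic,Arn=$topic_arn"
}
```

As written, the rule matches failed builds of every CodeBuild project in the account and region. Narrowing it down to a single project should be possible by adding the project name to the detail section of the event pattern. Forwarding to Slack or Teams would need an additional piece, for example a Lambda function subscribed to the topic.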

Summary

Setting up the CI/CD pipeline based on the template provided is easy and straightforward. In my opinion the benefits - especially the managed services and the pricing model - outweigh the cons. Additionally, it’s fun to implement custom extensions.